1. Introduction

In Checkmk Ultimate you can receive OpenTelemetry metrics and process them in monitoring. For this purpose, Checkmk includes an OpenTelemetry Collector. This supports the reception of OTLP data via the transport protocols GRPC and HTTP(S) (push configuration). It can also act as a scraper to collect data from Prometheus endpoints (pull configuration).


Support for OpenTelemetry data types (signals) is as follows:

Checkmk provides extensive support for metrics; these can be flexibly assigned to existing objects in monitoring or used to create custom objects.

Logs are partially supported — they can be forwarded to the Event Console and processed there.

Traces are currently not supported by Checkmk.

This article describes the status of the OpenTelemetry integration as of the release of Checkmk 2.5.0b4. We are continuing to incorporate changes made since then. Please refer to the OpenTelemetry-related Werks to find out the current status for the Checkmk version you are using.

2. Setup

Before enabling the OpenTelemetry Collector, you should already have enabled one or both of the following components to avoid errors:

The metrics backend, if you want to display OpenTelemetry metrics in monitoring

The Event Console with a Syslog receiver, if you want to process OpenTelemetry logs

You can enable both of these in the Global settings. In both cases, the default settings are sufficient for first use.

2.1. Activating the OpenTelemetry Collector

To process OpenTelemetry data, you must activate the collector in Global settings > Telemetry. There you can also set the memory limit for the collector. This ensures that large volumes of incoming data can overload at most the OpenTelemetry Collector itself, not your entire Checkmk system.

The collector starts automatically after you have saved the settings.

2.2. Setting up the OpenTelemetry Collector (passive)

To set up the collector, navigate to Setup > Telemetry > OpenTelemetry Collector if the collector is to operate in passive mode and receive data. Click the Add OpenTelemetry Collector configuration button to start configuring a collector. In the General properties, assign a name for the new collector.

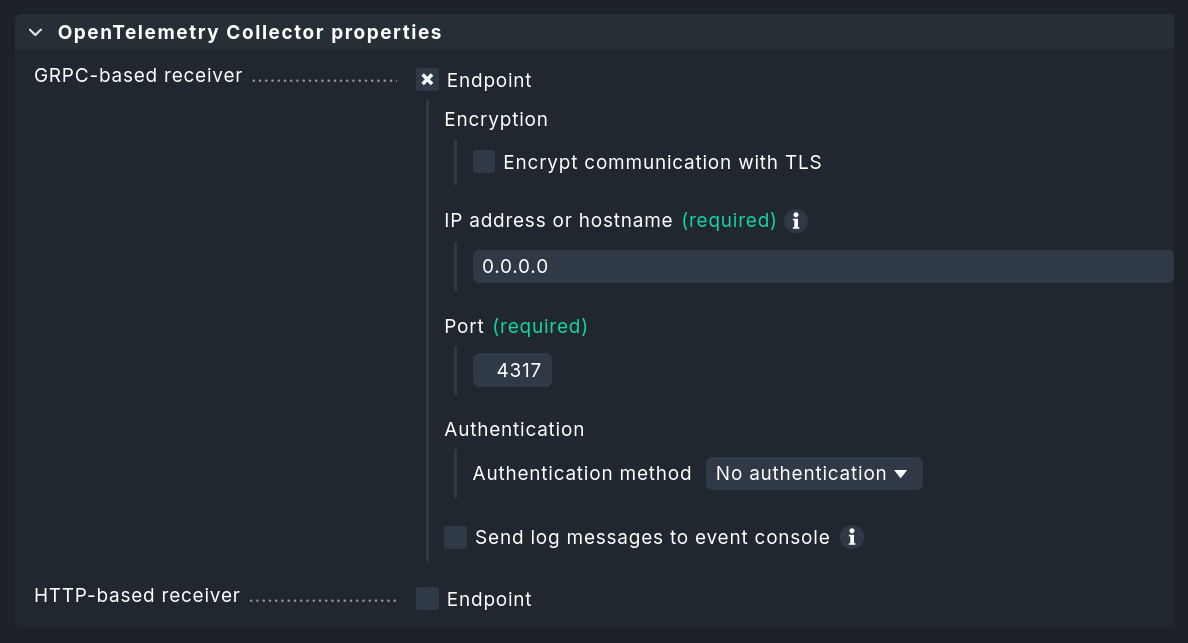

In the collector’s properties, you must configure at least one of the two push endpoints (via GRPC or HTTP). There, you define the IP addresses and ports to be used and specify whether authentication and encryption are enabled. Note: If you activate Basic authentication, you must also activate TLS encryption, since otherwise user name and password are transmitted merely Base64-encoded, i.e., effectively in plain text, and can easily be intercepted.
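To illustrate why TLS is mandatory with Basic authentication, the following Python sketch (standard library only) builds the headers a sender would attach to an OTLP/HTTP push. The host name and the credentials are hypothetical. Port 4318 and the path /v1/metrics are the OTLP/HTTP defaults, used here only for illustration. In practice, use whatever endpoint you configured in the collector.

```python
import base64
from urllib.parse import urlsplit


def otlp_http_headers(endpoint: str, user: str, password: str) -> dict:
    """Build request headers for an OTLP/HTTP push with Basic authentication.

    Plain-HTTP endpoints are rejected: Basic authentication only
    Base64-encodes the credentials, so without TLS anyone on the network
    path can read them.
    """
    if urlsplit(endpoint).scheme != "https":
        raise ValueError("Basic authentication requires an https:// endpoint")
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return {
        "Authorization": f"Basic {token}",
        # Default OTLP/HTTP payload encoding is binary protobuf.
        "Content-Type": "application/x-protobuf",
    }


# Hypothetical collector address (OTLP/HTTP default port and metrics path).
headers = otlp_http_headers("https://checkmk.example.com:4318/v1/metrics", "otel", "secret")

# Base64 is an encoding, not encryption: the credentials decode trivially.
print(base64.b64decode(headers["Authorization"].split()[1]).decode("utf-8"))  # otel:secret
```

Anyone who can read the request can reverse the header the same way, and this is exactly what TLS prevents.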

In many cases, applications sending OpenTelemetry data will refuse to communicate with TLS-encrypted collectors if the certificate used does not include the host name by which the sender addresses the collector. Navigate to Global settings and edit the option Site certificate subject alternative names to specify all the host names for which the server certificate should be valid.
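Whether a host name is covered depends on the usual certificate matching rules. The following Python sketch implements a simplified version of RFC 6125 matching for DNS names, which you can use to check a planned SAN list against the host names your senders use. The function name san_covers and the example host names are hypothetical. Certificates match IP addresses against separate IP address entries, and this sketch deliberately ignores those.

```python
def san_covers(hostname: str, san_dns_names: list) -> bool:
    """Return True if `hostname` matches one of the certificate's DNS SAN entries.

    Simplified RFC 6125 matching: case-insensitive, and a wildcard is only
    allowed as the complete left-most label ("*.example.com").
    """
    host = hostname.lower().rstrip(".")
    for name in san_dns_names:
        name = name.lower().rstrip(".")
        if name.startswith("*."):
            # "*.example.com" matches "a.example.com",
            # but neither "example.com" nor "a.b.example.com".
            head, _, rest = host.partition(".")
            if head and rest == name[2:]:
                return True
        elif host == name:
            return True
    return False


# Hypothetical SAN list as entered under "Site certificate subject alternative names".
sans = ["checkmk.example.com", "*.otel.example.com"]
for target in ["checkmk.example.com", "eu.otel.example.com", "otel.example.com"]:
    print(target, san_covers(target, sans))
```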

Sending logs to the Event Console

OpenTelemetry also provides ways to transmit log messages. The collector can forward these in Syslog format to any applications capable of receiving Syslog messages. This enables the processing of OpenTelemetry logs by the Event Console in Checkmk. You can enable this feature using the Send log messages to event console checkbox.

You must specify a Resource attribute for host name lookup. In the simplest case, this is service.name, i.e., the name of the OpenTelemetry service, which in many cases can easily be mapped to Checkmk hosts.

Click Save to save the collector.
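The following Python sketch illustrates the role of the host name lookup attribute when log records are turned into Syslog messages. It is a model under stated assumptions, not the collector's implementation. This article does not describe the exact Syslog format the Checkmk collector emits, so the severity mapping, the facility (1, user-level) and the APP-NAME otel are hypothetical choices. Only the RFC 5424 header layout and the PRI calculation are standard.

```python
from datetime import datetime, timezone

# Assumed mapping from OpenTelemetry severity texts to RFC 5424 severities.
SYSLOG_SEVERITY = {"FATAL": 2, "ERROR": 3, "WARN": 4, "INFO": 6, "DEBUG": 7}


def to_syslog(record: dict, resource: dict,
              lookup_attr: str = "service.name", facility: int = 1) -> str:
    """Format one OpenTelemetry log record as an RFC 5424 Syslog line.

    The HOSTNAME field is taken from the resource attribute used for host
    name lookup; without it, a message cannot be assigned to a host.
    """
    host = resource.get(lookup_attr)
    if not host:
        raise KeyError(f"resource attribute {lookup_attr!r} is missing")
    severity = SYSLOG_SEVERITY.get(record.get("severity_text", "INFO"), 6)
    pri = facility * 8 + severity  # RFC 5424: PRI = facility * 8 + severity
    ts = datetime.fromtimestamp(record["time_unix_nano"] // 1_000_000_000, tz=timezone.utc)
    # <PRI>VERSION TIMESTAMP HOSTNAME APP-NAME PROCID MSGID STRUCTURED-DATA MSG
    return f"<{pri}>1 {ts.isoformat()} {host} otel - - - {record['body']}"


print(to_syslog({"time_unix_nano": 0, "severity_text": "ERROR", "body": "disk full"},
                {"service.name": "dice-server"}))
# <11>1 1970-01-01T00:00:00+00:00 dice-server otel - - - disk full
```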

2.3. Setting up the Prometheus scraper (active)

If you want the collector to behave actively — that is, to query Prometheus endpoints — use Setup > Telemetry > Prometheus scraper. Click the Add Prometheus scraper configuration button to start setting up a new scraper.

The OpenTelemetry Collector converts the Job name specified here into the service.name attribute. In many cases, you will later want to map this attribute to a host name.

The other parameters specify access to the scraping endpoint:

For example, an endpoint accessible at http://198.51.100.252:9100/metrics results in setting IP address or host name to 198.51.100.252, Port to 9100, and Metrics path to /metrics.

For unencrypted HTTP, leave the checkbox next to Encrypt communication with TLS unchecked.
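The following Python sketch shows how an endpoint URL maps to the scraper fields. The dictionary keys reuse the field labels of the configuration dialog and are not an API. The job name node-exporter is a hypothetical example.

```python
from urllib.parse import urlsplit


def scraper_fields(job_name: str, url: str) -> dict:
    """Split a Prometheus endpoint URL into the scraper configuration fields."""
    parts = urlsplit(url)
    tls = parts.scheme == "https"
    return {
        "Job name": job_name,  # becomes the service.name attribute
        "IP address or host name": parts.hostname,
        # Fall back to the scheme's default port if the URL has none.
        "Port": parts.port or (443 if tls else 80),
        "Metrics path": parts.path or "/metrics",
        "Encrypt communication with TLS": tls,
    }


print(scraper_fields("node-exporter", "http://198.51.100.252:9100/metrics"))
```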

3. Sending test data to the collector

You’ll likely want to set up the OpenTelemetry Collector for Checkmk because something in your IT environment is already generating OpenTelemetry metrics. If this is not (yet) the case, or if you simply want to try out the setup on a test system, this chapter presents two sample applications that are quick to set up. With the setup completed so far, the received data ends up in the metrics backend, where you can verify that data is arriving, for example, in the SQL console. The next chapter shows how to use the data directly in monitoring.

We provide the simplest example application in our GitHub repository as 'Hello metric'. This runs in a virtual Python environment and creates an OpenTelemetry service with an OpenTelemetry metric. Notes on required Python modules and how to get started can be found in the repository.

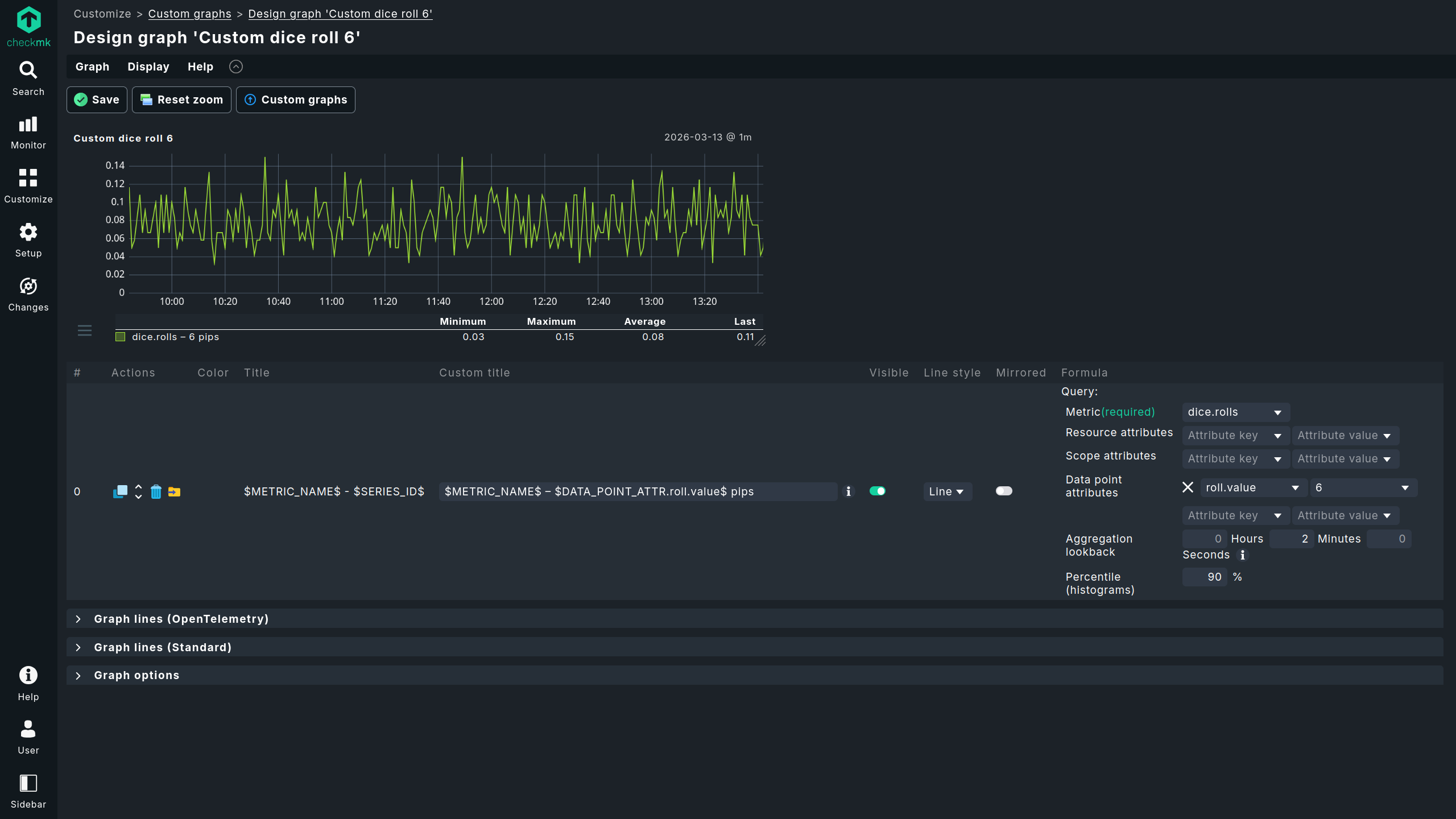
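To check only that the push endpoint accepts data, you can also send a single data point without any OpenTelemetry SDK. The following Python sketch builds a minimal OTLP/JSON request containing one gauge data point and posts it via OTLP/HTTP. It assumes that the HTTP endpoint of your collector accepts JSON-encoded OTLP, as the upstream OpenTelemetry Collector does. The service, metric and scope names are hypothetical, and this is not the code of the 'Hello metric' repository.

```python
import json
import time
import urllib.request


def gauge_payload(service: str, metric: str, value: float) -> dict:
    """Build a minimal OTLP/JSON metrics request with one gauge data point."""
    return {
        "resourceMetrics": [{
            "resource": {"attributes": [
                # service.name is the attribute Checkmk typically maps to hosts.
                {"key": "service.name", "value": {"stringValue": service}},
            ]},
            "scopeMetrics": [{
                "scope": {"name": "hello-metric-sketch"},
                "metrics": [{
                    "name": metric,
                    "gauge": {"dataPoints": [{
                        # 64-bit integers are encoded as strings in OTLP/JSON.
                        "timeUnixNano": str(time.time_ns()),
                        "asDouble": value,
                    }]},
                }],
            }],
        }]
    }


def push(endpoint: str, payload: dict) -> int:
    """POST the payload to an OTLP/HTTP receiver and return the HTTP status."""
    request = urllib.request.Request(
        endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

For example, `push("http://198.51.100.42:4318/v1/metrics", gauge_payload("hello-service", "hello.metric", 42.0))` returns the collector's HTTP status code if the request is accepted. For error responses, urllib raises an HTTPError instead. The address and port are placeholders for the HTTP endpoint you configured.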

The 'Dice Roll' sample application featured on the OpenTelemetry website works very similarly to 'Hello metric', but is more practical in many respects. For example, 'Dice Roll' sends OpenTelemetry metrics every time the application server is called (and only then!) and introduces the 'Counter' data type.

You can use the virtual Python environment created for the 'Hello metric' example to try out the 'Dice Roll' example as well. However, to do so, you must also install the Python module flask (pip install flask) and create the app.py file in the version extended with metrics, as described in this guide.

In the following call, specify your Checkmk server as the target (here 198.51.100.42 with GRPC port 4317):
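One way to make this call is sketched below in Python. The script starts the Flask app under the opentelemetry-instrument wrapper from the OpenTelemetry Python getting-started guide and points the OTLP exporter at Checkmk via the standard OTEL_* environment variables of the OpenTelemetry SDK. It assumes that the packages opentelemetry-distro and opentelemetry-exporter-otlp are installed in the virtual environment and that app.py is in the current directory. The helper names otel_env and run_dice_server are hypothetical.

```python
import os
import shutil
import subprocess


def otel_env(collector: str, service_name: str, base=None) -> dict:
    """Environment that points the OpenTelemetry SDK's OTLP exporter at Checkmk."""
    env = dict(os.environ if base is None else base)
    env.update({
        "OTEL_SERVICE_NAME": service_name,
        "OTEL_METRICS_EXPORTER": "otlp",
        "OTEL_EXPORTER_OTLP_PROTOCOL": "grpc",
        "OTEL_EXPORTER_OTLP_ENDPOINT": collector,
        # Checkmk does not process traces; logs are not needed for this test.
        "OTEL_TRACES_EXPORTER": "none",
        "OTEL_LOGS_EXPORTER": "none",
    })
    return env


def run_dice_server(collector: str, port: int = 8080) -> None:
    """Start the Flask app under the opentelemetry-instrument wrapper."""
    wrapper = shutil.which("opentelemetry-instrument")
    if wrapper is None:
        raise RuntimeError("opentelemetry-instrument not found (install opentelemetry-distro)")
    subprocess.run(
        [wrapper, "flask", "run", "-p", str(port)],
        env=otel_env(collector, "dice-server"),
        check=True,
    )
```

Calling `run_dice_server("http://198.51.100.42:4317")` corresponds to the example target above.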

Your Flask server is now ready and can be accessed via the selected port (in this case, 8080) to roll a die with each request.

You can trigger a die roll and view its result, for example, by running curl -v http://localhost:8080/rolldice.

Each die roll you trigger in this way is transmitted to Checkmk as a data point.

4. OpenTelemetry data in monitoring

There are three main ways to make use of incoming data in monitoring, and this chapter introduces all three. The methods differ in the scope of the generated services and visible metrics as well as in flexibility and system load. It is therefore important to choose the appropriate monitoring method for each application scenario.

The custom graphs visualize data directly from the metrics backend in dashboards — without going through services and without even involving the monitoring core. You can create rules for the special agent described in the following section directly from custom graphs.

A special agent for selected metrics is useful when you want to assign some data points received via OpenTelemetry to existing or newly created hosts, and are looking for a way to map data from different sources to the hosts to which they belong organizationally or technically. The overhead of this method is slightly higher, which is usually offset by the fact that a smaller volume of data is queried.

Using dynamic host management is ideal when it is possible to precisely map OpenTelemetry services to Checkmk hosts and OpenTelemetry metrics to Checkmk services. Since a dedicated fetcher is used and data therefore takes a very direct path, this approach is highly efficient.

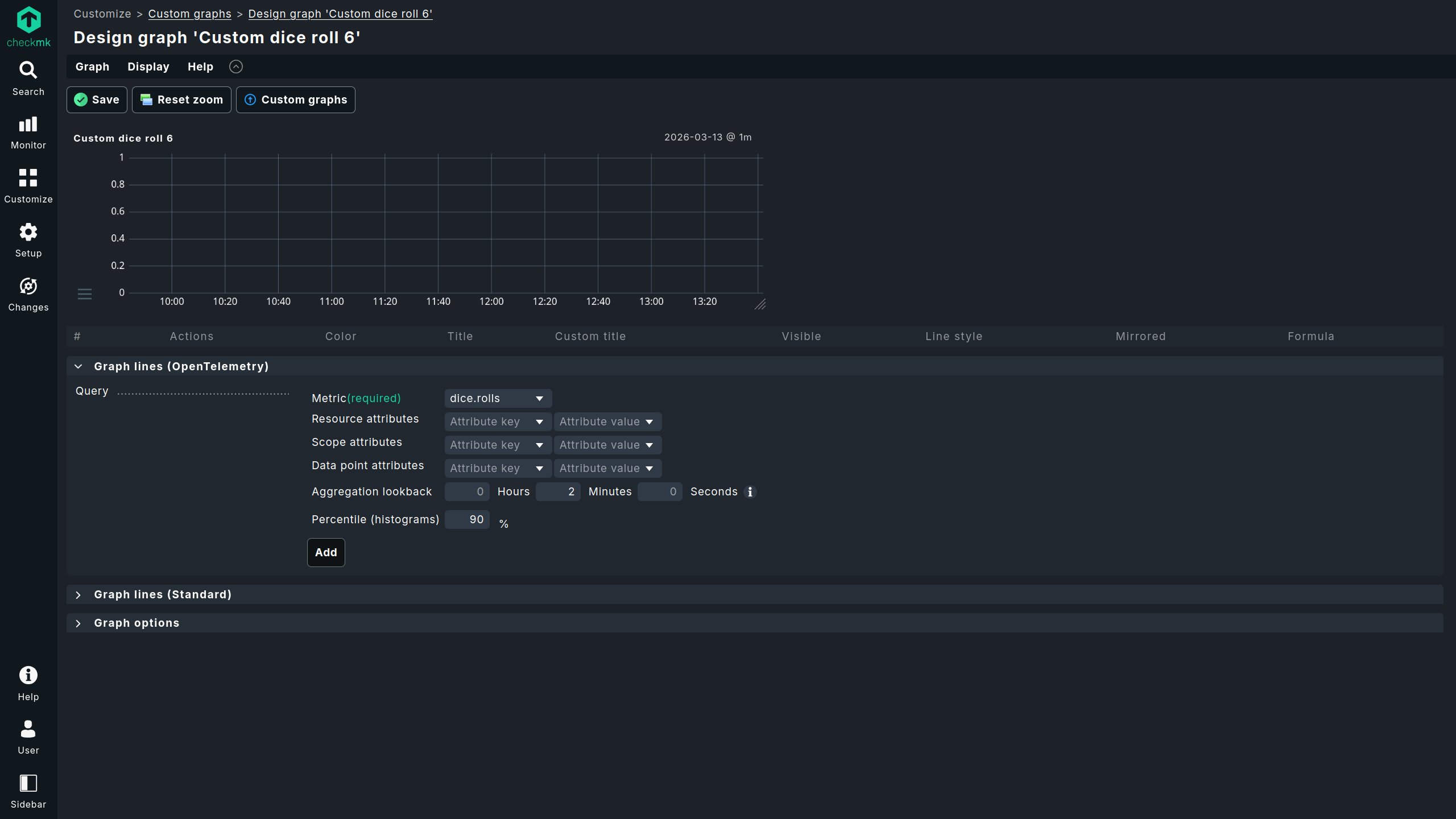

4.1. Creating custom graphs

Under Customize > Custom graphs, you can create a custom graph. The process differs slightly in some respects from graphs created from metrics stored in RRDs. For more details, see the article on metrics and graphing.

Once you have narrowed down the OpenTelemetry data points displayed in the graph sufficiently using the available attribute filters, you can use the export icon to export the created filters as filters for a special agent, which we will introduce in the following section.

The two most noticeable differences between custom graphs created from RRDs and those created from the metrics backend are:

The resolution: for RRDs, after two days the resolution is typically reduced to ten minutes; in the metrics backend, however, there is no change in resolution.

The retention period: for RRDs, the retention period is typically four years (configurable); for the metrics backend, it is two weeks (currently not configurable).
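A rough calculation shows what these differences mean for a 14-day window. The Python sketch assumes one data point per minute and a simplified RRD with only two levels: full resolution for two days, ten-minute averages afterwards. Real RRDs in Checkmk have additional consolidation levels, so the numbers are only indicative.

```python
MINUTES_PER_DAY = 24 * 60


def backend_points(days: int, step_min: int = 1) -> int:
    """Data points kept by the metrics backend: full resolution for the whole window."""
    return days * MINUTES_PER_DAY // step_min


def rrd_points(days: int, step_min: int = 1, full_days: int = 2, coarse_min: int = 10) -> int:
    """Data points in a simplified RRD: full resolution for `full_days`,
    then one consolidated value per `coarse_min` minutes."""
    recent = min(days, full_days) * MINUTES_PER_DAY // step_min
    older = max(days - full_days, 0) * MINUTES_PER_DAY // coarse_min
    return recent + older


# Assumption: one data point per minute, compared over the 14-day backend window.
print(backend_points(14))  # 20160
print(rrd_points(14))      # 4608
```

Within its two-week window, the metrics backend therefore retains more than four times as many data points. The RRD, in turn, trades resolution for its much longer retention period.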

4.2. Assigning custom metrics (custom queries) to any host

As an alternative to the method via Customize > Custom graphs, you can configure the special agent under Setup > Agents > Other integrations > Metric backend (custom query), which makes individual metrics from the metrics backend available as Checkmk services. This menu item is also where you modify configurations that were created from custom graphs for the special agent: custom graphs can only create new configurations, not edit existing ones. You can assign a custom metric to any host.

Custom metrics created from custom graphs are limited to a single OpenTelemetry metric as a source. If you need multiple OpenTelemetry metrics as sources, add the others in the special agent rules described in this section. If you create multiple special agent rules from custom graphs, only the first matching rule will be executed.

Since the metrics generated by the special agent follow the 'classic' path through the monitoring core and RRDs, they are not affected by the restrictions in distributed monitoring. You also have extensive options for naming; for example, you can combine free text with various macros, such as the original OpenTelemetry metric name.

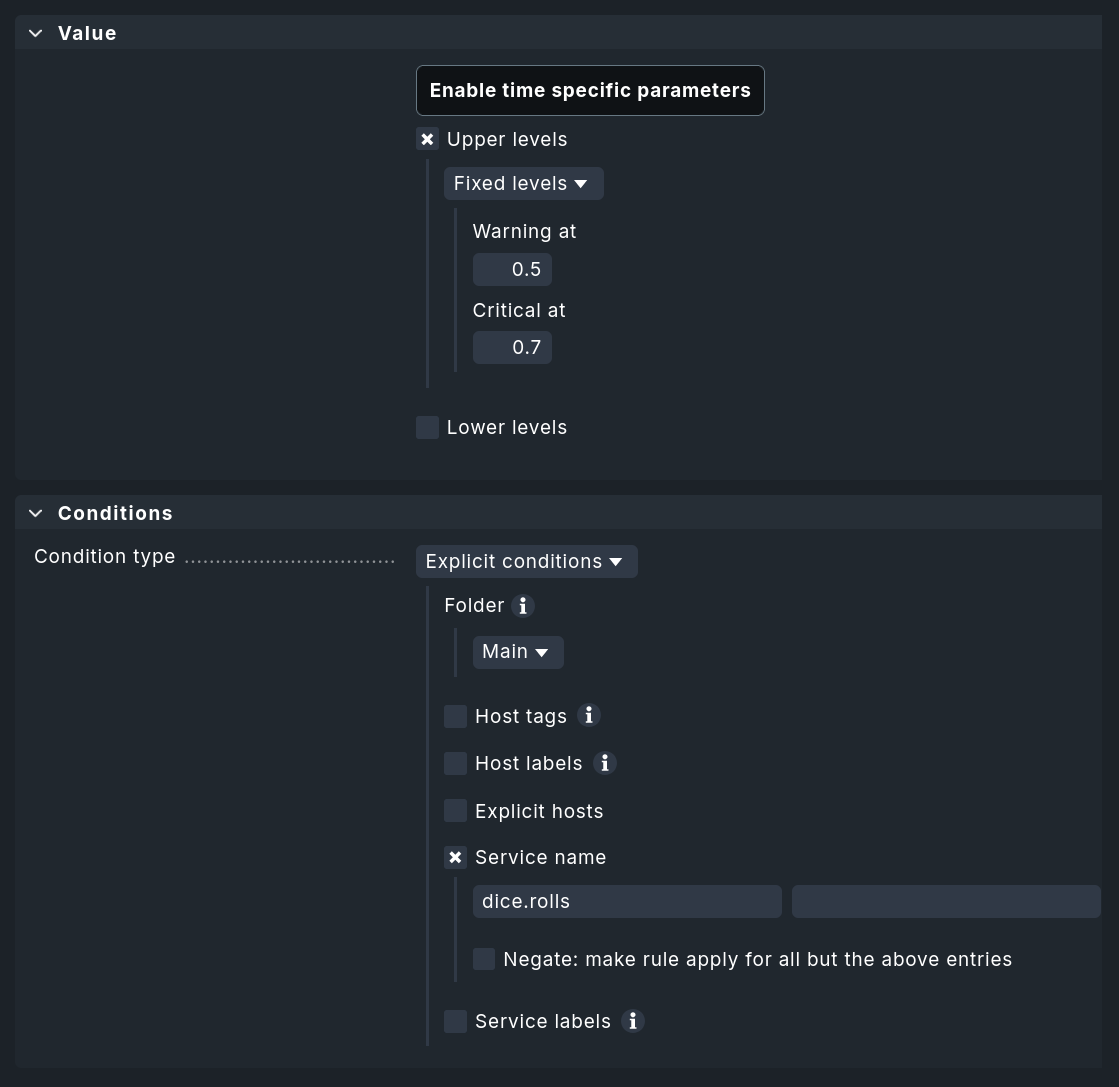

Configuring thresholds

To assign thresholds to these custom services, you can create rules via Setup > Services > Service monitoring rules > Metric backend (custom query). The assignment follows the standard convention: You can define upper and lower thresholds for WARN and CRIT. The conditions for hosts and the matching of service names also correspond to what you are familiar with from other parts of Checkmk.
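The following Python sketch models how a value is classified with upper and lower (WARN, CRIT) levels. It assumes that upper levels trigger once a value reaches the threshold and lower levels once a value falls below it. Treat it as a model for reasoning about thresholds, not as Checkmk's check plug-in code.

```python
from enum import IntEnum
from typing import Optional, Tuple

Levels = Optional[Tuple[float, float]]  # (warn, crit) or None


class State(IntEnum):
    OK = 0
    WARN = 1
    CRIT = 2


def evaluate(value: float, upper: Levels = None, lower: Levels = None) -> State:
    """Map a value to OK/WARN/CRIT using upper and lower (warn, crit) levels."""
    if upper is not None:
        warn, crit = upper
        if value >= crit:
            return State.CRIT
        if value >= warn:
            return State.WARN
    if lower is not None:
        warn, crit = lower
        if value < crit:
            return State.CRIT
        if value < warn:
            return State.WARN
    return State.OK


print(evaluate(85, upper=(80, 90)).name)  # WARN
```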

4.3. Setting up dynamic host management

To automatically create hosts, set up a new OpenTelemetry connection with dynamic host management. As a preliminary step, we recommend (at least for initial testing) creating a separate folder in which the automatically generated OpenTelemetry hosts will be stored.

You can set up the new connection for dynamic host management under Setup > Hosts > Dynamic host management > Add connection:

In the Connection properties section, configure at least the following settings:

Set Connector type to OpenTelemetry data - Metric backend.

Next, define the Attribute filters, which you use to preselect the criteria for creating hosts. The filters themselves can be left blank; only Resource attribute for hostname lookup requires a value.

In the example, the folder OpenTelemetryTest is used as the target folder for the new hosts (Create hosts in).

Select the Host attributes to set according to your system environment. In most cases, the hosts generated from Resource attributes will be virtual and used only in the context of OpenTelemetry. Consequently, you can generally use only special agents as monitoring agents (Configured API integrations, no Checkmk agent) and leave the settings for IP addresses set to No IP.

Since many hosts can sometimes be created (and deleted) in dynamic environments, you should set appropriate values for the retention period of deleted hosts and the maximum number of hosts retained by this OpenTelemetry connection.

For more information on the other parameters, see the article on dynamic host management.

Save the new connection using Save and activate the changes.

Once dynamic host management has been set up, the changes have been activated, and OpenTelemetry data is being received, hosts with the expected services will appear automatically. Configuring a special agent is no longer necessary: instead of the long processing chain involving a special agent and a check plug-in, special fetchers query the metrics backend and pass Checkmk metrics and service states directly to the monitoring core.
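Conceptually, the connection derives one host per value of the lookup attribute and one service per OpenTelemetry metric. The following Python sketch models only this mapping, including the preselection by attribute filters. It leaves out folders, host attributes and the retention of deleted hosts, and both the function plan_hosts and the example data are hypothetical.

```python
from collections import defaultdict


def plan_hosts(data_points, lookup_attr="service.name", attr_filter=None):
    """Model of dynamic host management: one host per value of the lookup
    attribute, one service per OpenTelemetry metric name."""
    attr_filter = attr_filter or {}
    hosts = defaultdict(set)
    for point in data_points:
        resource = point["resource"]
        # Attribute filters preselect which data may create hosts at all.
        if any(resource.get(key) != value for key, value in attr_filter.items()):
            continue
        host = resource.get(lookup_attr)
        if host is None:
            continue  # no host name can be derived from this data point
        hosts[host].add(point["metric"])
    return {host: sorted(metrics) for host, metrics in sorted(hosts.items())}


points = [
    {"resource": {"service.name": "dice-server", "deployment.environment": "test"},
     "metric": "dice.rolls"},
    {"resource": {"service.name": "hello-service", "deployment.environment": "prod"},
     "metric": "hello.metric"},
    {"resource": {"host.name": "build01"}, "metric": "system.cpu.time"},
]
print(plan_hosts(points))
print(plan_hosts(points, attr_filter={"deployment.environment": "test"}))
```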

Configuring thresholds

You can set thresholds, and more, for services using the Setup > Services > Service monitoring rules > OpenTelemetry rule.

Here, you can define rules for individual metrics or all metrics for a service.

The configuration options here are considerably more extensive than for custom metrics created via a Custom query.

The example screenshot shows the selection of the counter shown above for the OpenTelemetry metric dice.rolls.

Depending on the data type of the OpenTelemetry data point, it may be useful to calculate rates, for example for counters such as those for dropped network packets. If multiple sources provide data assigned to the same Checkmk service, you can also specify whether an aggregation (such as a sum or average) should be performed, or whether the most recent, largest, or smallest data point within an interval should take precedence.
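The following Python sketch illustrates both ideas. It computes a per-second rate from two samples of a cumulative counter, treating a drop as a counter reset (for example, after an application restart). It also shows the aggregation options for data points from several sources within one interval. The function names and option keys are hypothetical, and Checkmk's actual computation may differ in details such as reset handling.

```python
from statistics import fmean


def rate(samples):
    """Per-second rate from cumulative counter samples [(t_seconds, value), ...].

    Only the last two samples are used; a drop in value is treated as a
    counter reset, so counting restarts from zero.
    """
    (t0, v0), (t1, v1) = samples[-2], samples[-1]
    if t1 <= t0:
        raise ValueError("samples must be in chronological order")
    delta = v1 - v0 if v1 >= v0 else v1
    return delta / (t1 - t0)


AGGREGATIONS = {"sum": sum, "average": fmean, "maximum": max, "minimum": min}


def aggregate(points, how):
    """Combine data points [(t, value), ...] from several sources in one interval."""
    if how == "latest":
        return max(points, key=lambda p: p[0])[1]  # value with the newest timestamp
    return AGGREGATIONS[how]([value for _, value in points])


# dice.rolls counter: 10 at t=0 s, 40 at t=60 s -> 0.5 rolls per second
print(rate([(0, 10), (60, 40)]))  # 0.5
```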